Over the last few years, we’ve often talked about how we’re living in a golden age of artificial intelligence (AI). Ideas that seemed like science fiction not so long ago are now a reality—and there’s no better example of that than Alexa. What started as a sketch on a whiteboard has evolved into an entirely new computing paradigm—one that has fundamentally changed how people across the world interact with technology in their homes. Having passed half a billion devices sold, and with tens of millions of interactions every hour, Alexa has become part of the family in millions of households. We’ve always thought of Alexa as an evolving service, and we’ve been continuously improving it since the day we introduced it in 2014. A longstanding mission has been to make a conversation with Alexa as natural as talking to another human, and with the rapid development of generative AI, what we imagined is now well within reach. Today, we’re excited to share an early preview of what the future looks like.

This is an early look at a smarter and more conversational Alexa, powered by generative AI. It’s based on a new large language model (LLM) that’s been custom-built and specifically optimized for voice interactions and for the things we know our customers love: getting real-time information, controlling their smart home efficiently, and making the most of their home entertainment. We believe this will drive the future of Alexa, enabling us to enhance five foundational capabilities:

1. Conversation

We’ve learned a lot about conversation in the last few years, and we know that being conversational goes beyond words. In any conversation, we process a great deal of additional information, such as body language, eye contact, and what we know about the person we’re talking with. To enable that with Alexa, we fused the input from the sensors in an Echo (the camera, voice input, and its ability to detect presence) with AI models that can understand those non-verbal cues. We’ve also focused on reducing latency so conversations flow naturally, without pause, and on keeping responses the right length for voice, not the equivalent of listening to paragraph after paragraph read aloud. When you ask for the latest on a trending news story, you get a succinct response with only the most relevant information. If you want to know more, you can follow up.

2. Real-world utility

To be truly useful, Alexa has to be able to take action in the real world. That has been one of the unsolved challenges with LLMs: how to integrate APIs at scale and reliably invoke them to take the right actions. This new Alexa LLM will be connected to hundreds of thousands of real-world devices and services via APIs. It also enhances Alexa’s ability to process nuance and ambiguity, much like a person would, and intelligently take action. For example, the LLM gives you the ability to program complex Routines entirely by voice. Customers can just say, “Alexa, every weeknight at 9 p.m., make an announcement that it’s bedtime for the kids, dim the lights upstairs, turn on the porch light, and switch on the fan in the bedroom.” Alexa will then automatically program that series of actions to take place every weeknight at 9 p.m.

3. Personalization and context

An LLM for the home has to be personalized to you and your family. Just as a conversation with another person is shaped by context, such as your previous conversations or the situation you’re in, a conversation with Alexa needs to be shaped by context too. The next generation of Alexa will be able to deliver unique experiences based on the preferences you’ve shared, the services you’ve interacted with, and information about your environment. Alexa also carries over relevant context throughout conversations, in the same way that humans do all the time. People use pronouns and catchphrases, and build up context about the places, times, or scenes they talk about. Ask Alexa a question about a museum, and you’ll be able to ask a series of follow-ups about its hours, exhibits, and location without needing to restate any of the prior context, like the name or the day you plan to go.

4. Personality

Customers have told us time and again that they love Alexa’s personality. You don’t want a rote, robotic companion in your home, and I’d argue Alexa’s personality is one of the biggest reasons for Alexa’s broad adoption. As we’ve always said, the most boring dinner party is one where nobody has an opinion—and, with this new LLM, Alexa will have a point of view, making conversations more engaging. Alexa can tell you which movies should have won an Oscar, celebrate with you when you answer a quiz question correctly, or write an enthusiastic note for you to send to congratulate a friend on their recent graduation.

5. Trust

There should be no trade-off between trustworthiness and performance. Customers around the world have welcomed Alexa into their homes, and to be truly useful in their daily lives, we must continue to create experiences that they both love and trust. While the integration of generative AI brings infinite new possibilities, our commitment to earning our customers’ trust will not change. As with all our products, we will design experiences to protect our customers’ privacy and security, and to give them control and transparency.

To our knowledge, this is the largest integration of an LLM, real-time services, and a suite of devices, and it’s not limited to a tab in a browser. And we’re just getting started: with generative AI, we’re also able to enhance a number of core components of the Alexa experience.

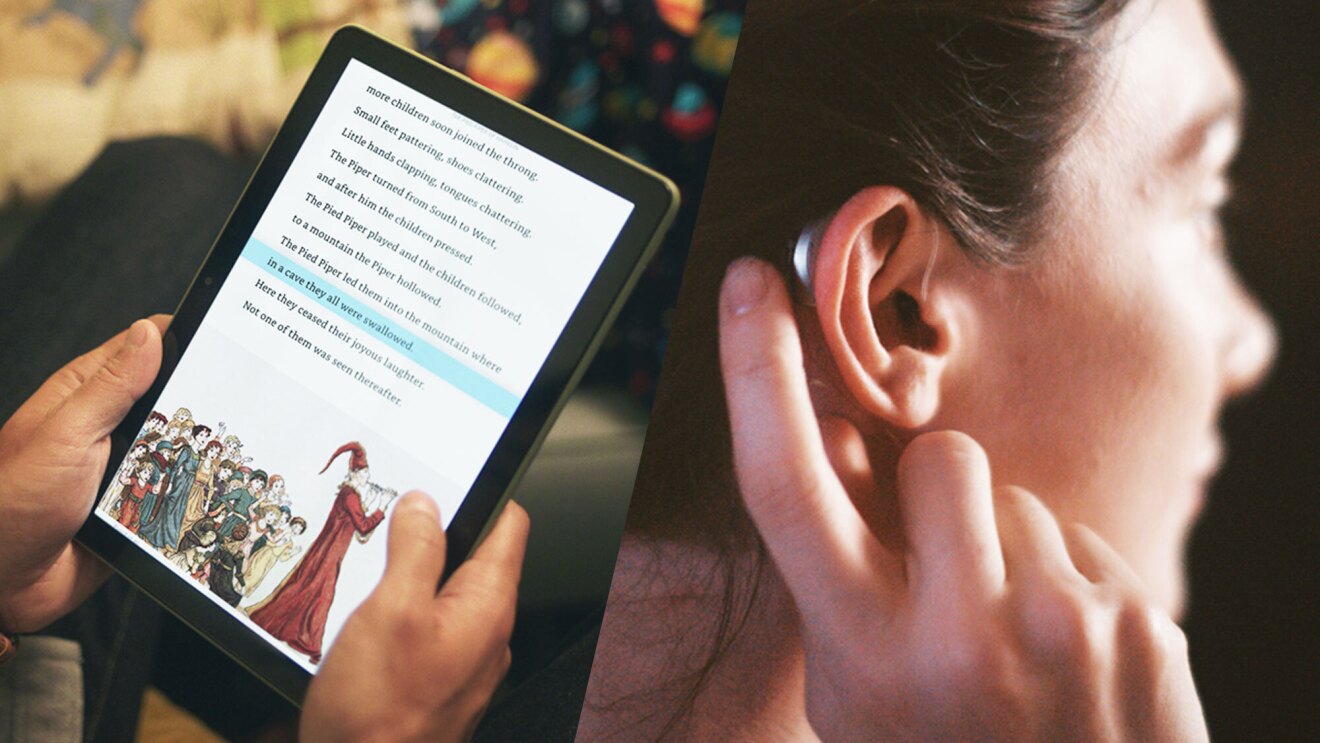

One of these components is how customers start an interaction with Alexa. Building on the experience that exists today, customers who choose to enroll in Visual ID will be able to start a conversation with Alexa simply by facing the screen on an Echo Show, with no wake word required. The result is the most natural conversation experience we’ve ever built. We also built a whole new conversational speech recognition (CSR) engine using large models. As humans, we often pause during conversation to gather our thoughts or emphasize a point, and identifying those cues is incredibly hard for an AI. This new CSR engine is capable of adjusting to those common natural pauses and hesitations, enabling more flowing, natural conversation. Finally, generative AI has enabled us to enhance our text-to-speech technology, using a large transformer model to make Alexa much more expressive and attuned to conversational cues.

What this means is that Alexa will adapt to your cues and modulate its response and tone, much as people do in conversation. Ask Alexa if your team won, and it will respond in a joyful voice if so; if they lost, the response will be more empathetic. Ask Alexa for an opinion, and the response will be more enthusiastic, as it would be if a friend were sharing a point of view.

To demonstrate how far we’ve come, here’s a reminder of how Alexa sounded when we first launched:

And here is what Alexa will sound like early next year:

Combined, these enhancements will take what is already the world’s best personal AI and make it even better. I’ve been using these new capabilities over the past few months, and it feels just as transformative as the first time I experienced talking to Alexa a decade or so ago. That’s not to say it’ll be perfect. Alexa will make mistakes, but, as it always has, the experience will continue to get better over time.

We’re at the start of a journey—a foundation that we believe will lead to a new version of Alexa powered by generative AI. We’ll continue to develop and add more capabilities as part of a free preview, which will be available to Alexa customers in the U.S. soon. We know customers will have lots of feedback, and we can’t wait to hear it.

Stay tuned for more. In the meantime, here is an early look at Alexa’s new capabilities.

Trending news and stories