Generative artificial intelligence (generative AI) has recently captured the world’s imagination with feats like summarizing text, writing marketing materials, and composing code. Today I want to tell you how, long before the current buzz around generative AI, we leveraged it to reimagine the future of convenience in shopping, entertainment, and access.

My team and I like to build experiences for customers that feel like magic. Amazon One, which combines cutting-edge biometrics, optical engineering, generative AI, and machine learning, fits the bill perfectly.

Amazon One is a fast, convenient, and contactless experience that enables customers to leave their wallets (and even their phones) at home. They can instead use the palm of their hand for everyday activities like paying at a store, presenting a loyalty card, verifying their age, or entering a venue.

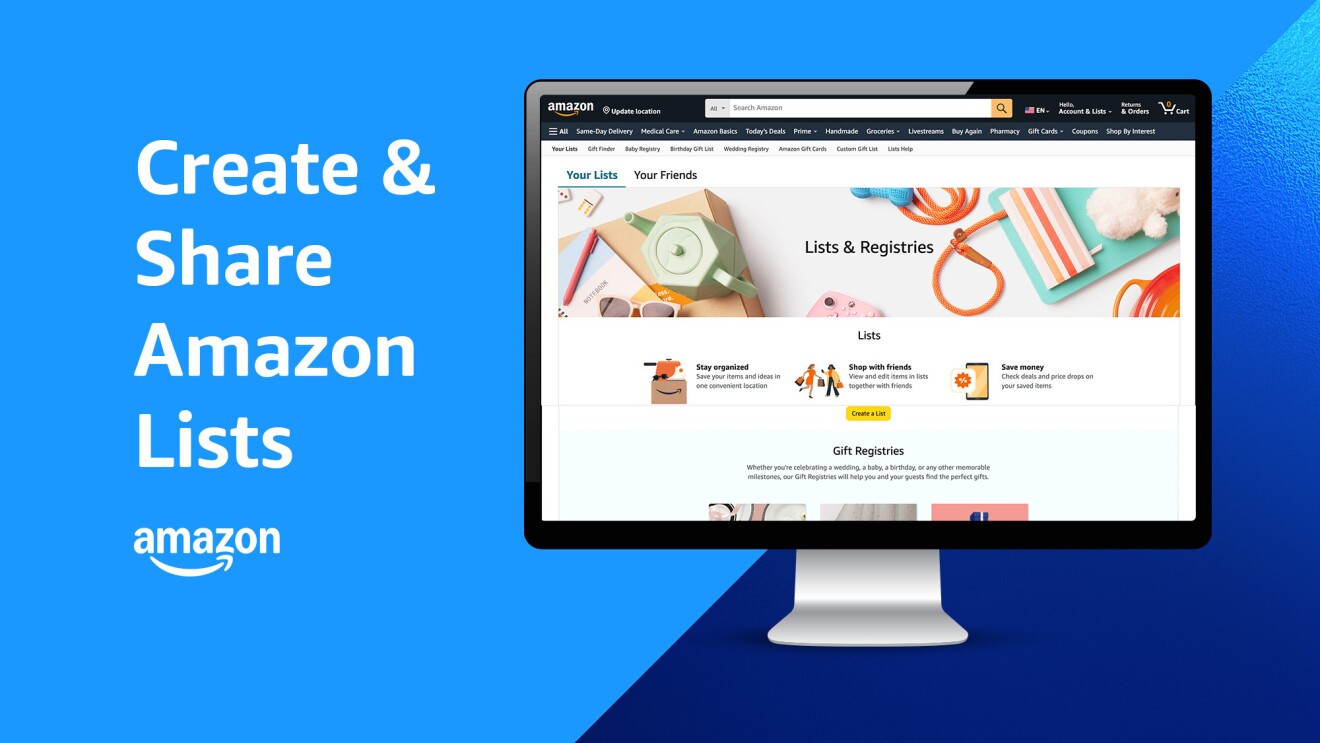

Currently, Amazon One is being rolled out to more than 500 Whole Foods Market stores and dozens of third-party locations, including travel retailers, sports and entertainment venues, convenience stores, and grocers. At each location, you will see a small Amazon One device, a type of scanner that uses infrared light to recognize the unique lines, grooves, and ridges on your palm, and the pulsating network of veins just under the skin. The system uses this information to create your palm signature, a unique numerical vector representation, and connects it to your credit card or your Amazon account.

But the magic and ease of the Amazon One experience belie the complexity of the problem we had to solve. Amazon One comes down to one simple point: the system can’t make a mistake.

Where generative AI comes in

In the decades I have spent studying, teaching, building, and training deep learning models, I have learned one thing: To be highly accurate, systems need a lot of good data. But when was the last time you saw an image of a human palm? This left us in a pickle: How were we going to train a system where accuracy is paramount when we only had a small amount of palm data?

That’s when we decided to use generative AI. Generative AI is a subset of machine learning powered by models trained on billions of data points from books, articles, pictures, and other sources. These include large language models (LLMs) like the ones behind ChatGPT and “multi-modal” models trained on inputs like text, images, video, and sound. We used generative AI to create a “palm factory” that produced millions of synthetic images of palms to train our AI model.

The industry term for this computer-generated information is “synthetic data,” new data created by the AI to replicate the breadth and variety of real data as closely as possible. This was pioneering work that happened years before the current generative AI craze started dominating conversations around the world.

Synthetic data boosts accuracy of Amazon One

Training Amazon One on millions of synthetically generated images of the palm and the vessels underneath allowed us to boost the system’s accuracy. To begin with, the palm factory quickly generated hands reflecting a myriad of subtle changes, like varying illumination conditions, hand poses, and even the presence of a Band-Aid. But that’s not all. The images were also automatically “annotated,” a step that is normally long and laborious when done by hand. This saved time and allowed us to move faster, as we didn’t have to label the pictures and tell the computer that it was looking at the pattern lines of your palm, a scar, or a wedding band.
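To make the idea of "free" annotations concrete, here is a minimal sketch. It is not how the palm factory works: it swaps the generative model for a toy procedural renderer, and the function name `make_synthetic_palm`, the image size, and the bandage probability are all assumptions made for illustration. The point it shows is that when a program draws the image, it already knows where every line and bandage is, so the labels come along at no extra cost.

```python
import random


def make_synthetic_palm(seed, size=32):
    """Return a toy grayscale 'palm' image plus the labels describing it.

    Hypothetical stand-in for a generative model: every value here is
    invented for illustration, not taken from Amazon One.
    """
    rng = random.Random(seed)  # seeded so each sample is reproducible

    # Simulate varying illumination with a random base brightness.
    brightness = rng.uniform(0.5, 1.0)
    image = [[brightness] * size for _ in range(size)]
    annotations = {"brightness": brightness, "lines": [], "bandage": None}

    # Draw a few darker horizontal rows as stand-ins for palm lines.
    for _ in range(rng.randint(2, 4)):
        row = rng.randrange(size)
        for col in range(size):
            image[row][col] = brightness * 0.3
        annotations["lines"].append(row)

    # Occasionally cover part of the palm with a bright square "bandage".
    if rng.random() < 0.3:
        top, left, side = rng.randrange(size - 8), rng.randrange(size - 8), 8
        for r in range(top, top + side):
            for c in range(left, left + side):
                image[r][c] = 1.0
        annotations["bandage"] = (top, left, side, side)

    # The generator knows what it drew, so no manual labeling is needed.
    return image, annotations


# Build a small labeled dataset in one pass.
dataset = [make_synthetic_palm(seed) for seed in range(1000)]
```

Each entry in `dataset` is an `(image, annotations)` pair, so a training pipeline could consume the labels directly instead of waiting for a person to mark up each picture.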

We also trained our system to detect fake hands, such as a highly detailed silicone hand replica, and reject them. Amazon One has already been used more than 3 million times with 99.9999% accuracy. The combination of the palm surface and subcutaneous images allowed us to build a system that’s 100 times more accurate than scanning two irises.

By leveraging generative AI and synthetic data, we were able to solve a problem that’s much harder than, say, using your face to unlock your smartphone. That’s because if your face is on your phone, it already knows who you are and just verifies that it is you. We call this a one-to-one mapping. With Amazon One, we don’t know who you are when you put your hand over the scanner. We need to distinguish you from everyone else who is enrolled, and do it fast. And if you are not enrolled, we also need to be able to say, “You’re not in the system.” Amazon One does this and much more.
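The difference between the two problems can be sketched in a few lines. This is only an illustration under stated assumptions: the three-number vectors, the cosine-similarity measure, the 0.95 threshold, and the names `verify` and `identify` are all hypothetical and say nothing about how Amazon One actually compares palm signatures.

```python
import math


def cosine_similarity(a, b):
    """Similarity between two vectors; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def verify(probe, enrolled_vector, threshold=0.95):
    """One-to-one: does the probe match the single known owner?"""
    return cosine_similarity(probe, enrolled_vector) >= threshold


def identify(probe, gallery, threshold=0.95):
    """One-to-many: find the best match among everyone enrolled.

    Returns None when no enrolled vector clears the threshold,
    which corresponds to "you're not in the system".
    """
    best_id, best_score = None, threshold
    for user_id, vector in gallery.items():
        score = cosine_similarity(probe, vector)
        if score >= best_score:
            best_id, best_score = user_id, score
    return best_id


# Hypothetical enrolled signatures (real ones would be far longer).
gallery = {
    "alice": [0.9, 0.1, 0.3],
    "bob": [0.1, 0.8, 0.5],
}

probe_alice = [0.88, 0.12, 0.31]   # a fresh scan close to alice's vector
probe_stranger = [0.5, 0.5, 0.5]   # someone who never enrolled

print(verify(probe_alice, gallery["alice"]))   # one-to-one check
print(identify(probe_alice, gallery))          # one-to-many search
print(identify(probe_stranger, gallery))       # no match above threshold
```

Note that `verify` makes a single comparison, while `identify` has to compare against every enrolled person and still decide when to reject, so any small per-comparison error rate gets multiplied as the gallery grows. That is why the one-to-many case demands so much more accuracy.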

We have already expanded the applications of Amazon One beyond payment to loyalty linking and age verification—read about our work with customers like Panera and Coors Field.

Amazon One was also designed to protect customer privacy—the system operates beyond the normal light spectrum and cannot accurately perceive gender or skin tone. Amazon One also does not use palm information to identify a person, only to match a unique identity with a payment instrument. Learn more from my blog that addresses privacy in detail.

These are still the early days for Amazon One, and I’m excited to see it reach more businesses and consumers and realize its full potential.