No doubt, AI will fundamentally alter the customer experience. But it has also given rise to much debate. As Amazon CEO Andy Jassy shares in his latest letter to shareholders, there are key truths about the technology—and AWS’s role in this land rush—that are tough to debate. Here are six of them:

Every customer experience will be reinvented by AI, and there will be a slew of new experiences only possible because of AI. I’ve followed the public debate on whether this technology is over-hyped, whether we’re in “a bubble,” and if the margins and ROIC will be appealing. My strong conviction, at least for Amazon, is that the answers are no, no, and yes. Here are some truths that are hard to debate.

1/ We have never seen a technology more quickly adopted than AI. When ChatGPT launched in November 2022, it reached 100 million users in two months, four times faster than TikTok and 15 times faster than Instagram (ChatGPT already has over 900 million weekly active users). Both OpenAI and Anthropic have revenue run rates reportedly approaching $30 billion. These are breathtaking numbers for companies this soon after their commercial launches. When Edison opened his first commercial power station in 1882, most people understood it as a better way to light a room. What they couldn’t see was that electricity would eventually reorganize every factory, home, and industry on Earth. AI may have a comparable impact. The difference is that electricity took 40 years to get where it was going. AI appears to be moving ten times faster.

2/ Amazon is smack in the middle of this land rush, and companies are choosing AWS for AI. Three years after AWS launched commercially, it had a $58 million revenue run rate. Three years into this AI wave, AWS’s AI revenue run rate is over $15 billion in Q1 2026 (nearly 260 times larger than AWS at that same point)—and ascending rapidly.

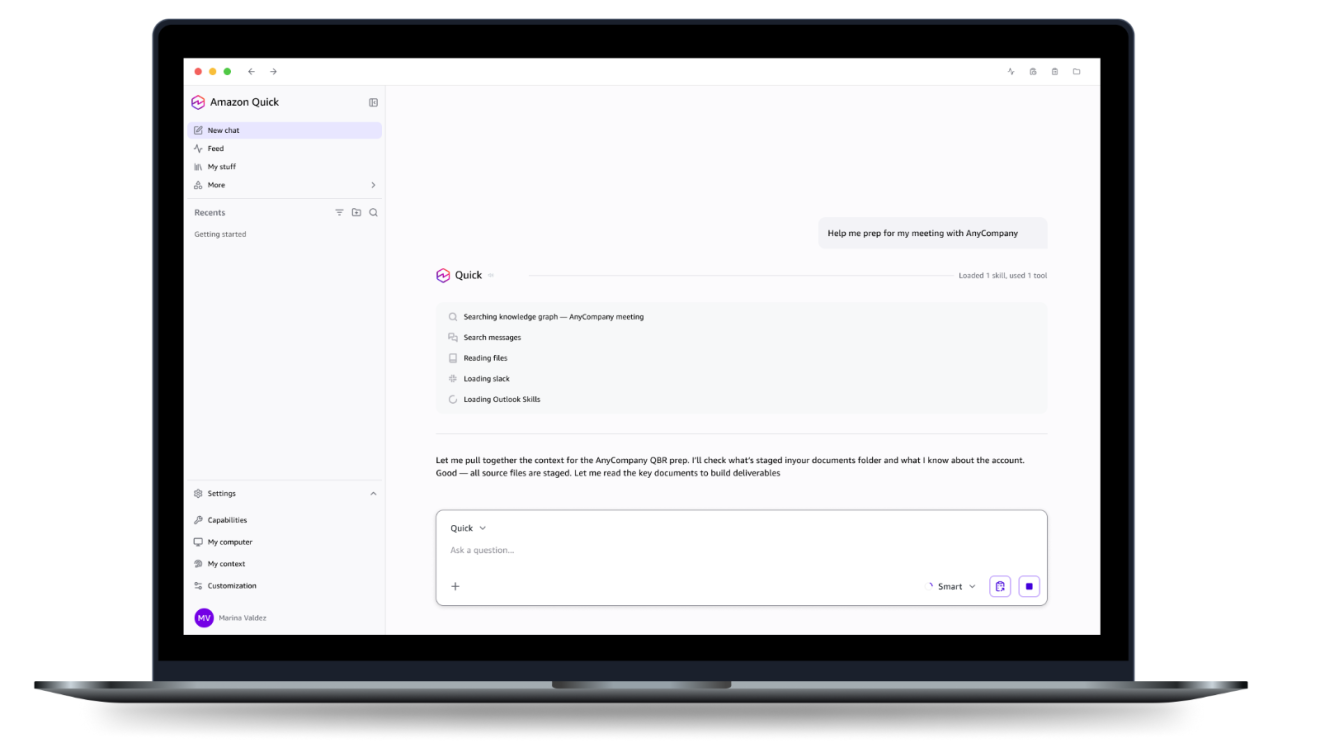
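As a quick check on the comparison above, here is a minimal sketch that recomputes the ratio from the two run rates quoted in the letter. The variable names are illustrative, and the AI figure is described only as "over $15 billion," so the result is a floor rather than an exact multiple.

```python
# Run rates quoted in the text, in US dollars.
aws_run_rate_year3 = 58e6        # AWS, three years after its commercial launch
aws_ai_run_rate_q1_2026 = 15e9   # AWS AI revenue run rate, stated as "over $15 billion"

# How many times larger the AI run rate is than early AWS at the same point.
ratio = aws_ai_run_rate_q1_2026 / aws_run_rate_year3
print(f"AWS AI run rate is roughly {ratio:.0f}x the early AWS figure")
```

The result lands just under 260, which is consistent with the "nearly 260 times larger" claim.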

Customers are choosing AWS for AI for a few reasons. First, we have broader capabilities than others, with compelling offerings for model-building (SageMaker), high-performance inference with a leading selection of frontier models (Bedrock), lower-cost inference (on our custom silicon, Trainium), agent-building (Strands), scalable and secure agent environments (AgentCore), and turnkey agents for coding, software migrations, and most tasks knowledge workers use in their daily routines (Kiro, Transform, and Quick). Second, as customers expand their use of AI, they want their inference to reside near their other applications and data (for latency reasons), and much more of it resides in AWS than anywhere else. Third, as customers expand their AI usage, they consume a lot of additional non-AI services, where AWS also has the broadest and most capable offerings. And fourth, AWS has the strongest security and operational performance of any AI and infrastructure provider. We spend a lot of time listening to customers, and they continue to remark on AWS’s advantaged performance as they increasingly move their AI to AWS.

3/ AWS could be growing even faster. AWS added 3.9 gigawatts (“GW”) of new power capacity in 2025, expects to double total power capacity by the end of 2027, and is monetizing that capacity as fast as it’s installed. In Q4 2025, AWS reported 24% YoY growth with a $142 billion revenue run rate. That’s a lot of absolute growth. And yet, we still have capacity constraints that yield unserved demand. [As an aside, two large AWS customers have already asked if they could buy *all* of our Graviton instance capacity in 2026 (Graviton is our widely adopted custom CPU chip). We can’t agree to these requests given other customers’ needs, but it gives you an idea of the demand.]

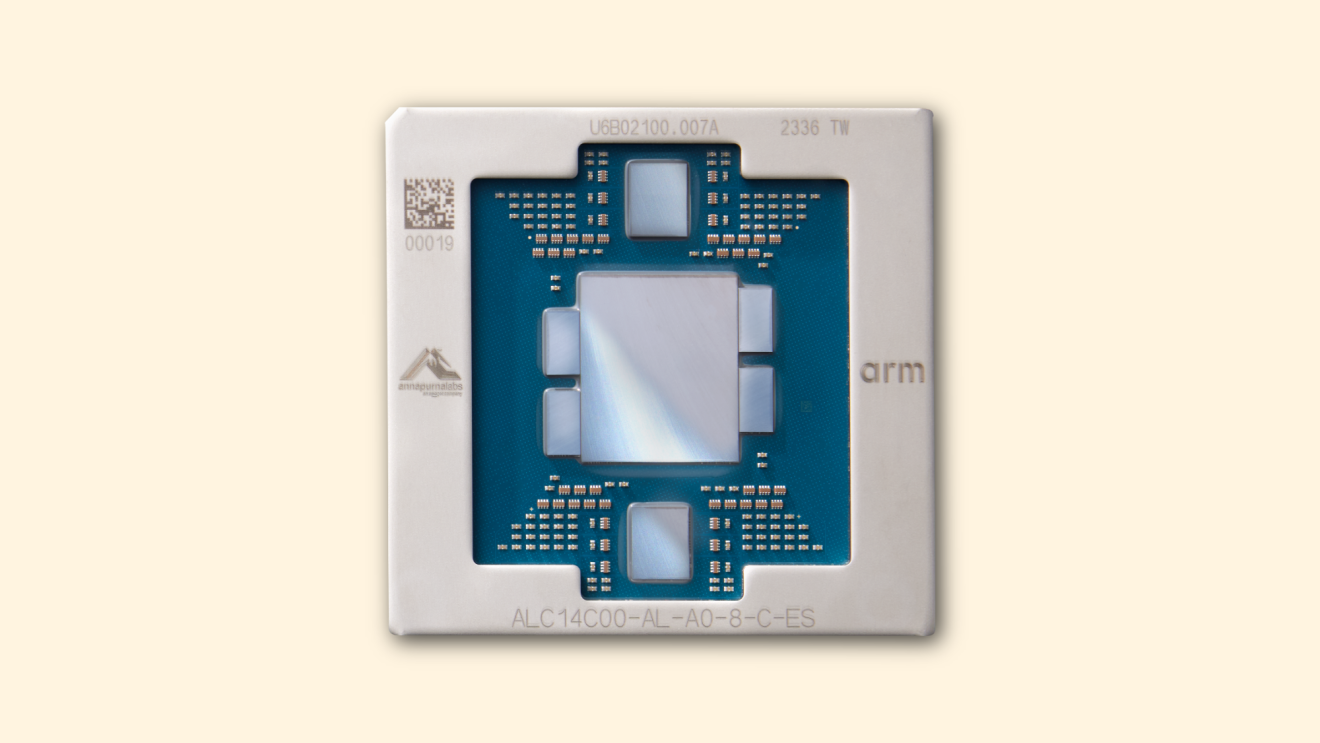

4/ Our chips business is on fire, changes the economics for AWS, and will be much larger than most think. Virtually all AI thus far has been done on NVIDIA chips, but a new shift has started. We have a strong partnership with NVIDIA, will always have customers who choose to run NVIDIA, and we will continue to make AWS the best place to run NVIDIA. However, customers want better price-performance. We’ve seen this movie before. In the CPU space, virtually all of the workloads ran on Intel chips until we invented Graviton in 2018. Graviton, which has up to 40% better price-performance than comparable x86 processors, is now used expansively by 98% of the top 1,000 EC2 customers. The same story arc is unfolding in AI. The second version of our custom AI silicon (Trainium2) had about 30% better price-performance than comparable GPUs, and has largely sold out. Trainium3, which started shipping at the start of 2026 and is 30-40% more price-performant than Trainium2, is nearly fully subscribed. A significant chunk of Trainium4, which is still about 18 months from broad availability, has already been reserved. And Amazon Bedrock, AWS’s primary (and very fast-growing) inference service, runs most of its inference on Trainium. Demand for Trainium is booming.

Having our own hotly demanded AI chip opens up many possibilities, but perhaps none larger than the ability to lower costs for customers and secure better economics for AWS. At scale, we expect Trainium will save us tens of billions of capex dollars per year, and provide several hundred basis points of operating margin advantage versus relying on others’ chips for inference.

Our annual revenue run rate for our chips business (inclusive of Graviton, Trainium, and Nitro, our EC2 NIC) is now over $20 billion, and growing at triple-digit percentages YoY. To put this in context versus other chips companies, that run rate is somewhat understated because we currently monetize our chips only through EC2. If our chips business were a stand-alone business, and sold chips produced this year to AWS and other third parties (as other leading chips companies do), our annual run rate would be ~$50 billion. There’s so much demand for our chips that it’s quite possible we’ll sell racks of them to third parties in the future.

5/ The way AWS’s cash cycle works is that the faster AWS grows, the more short-term capex we’ll spend. AWS has to lay out cash for land, power, buildings, chips, servers, and networking gear in advance of when we can monetize it (typically 6-24 months before we start billing customers, depending on the component). However, these capex investments fund assets with many-year useful lives (30+ years for datacenters; 5-6 years for chips, servers, and networking gear). The FCF and ROIC for these investments are cumulatively quite attractive a couple of years after being in service; however, in times of very high growth (like now), where the capex growth meaningfully outpaces the revenue growth, the early-years FCF is challenged until these initial tranches of capacity are being monetized and revenue growth outpaces capex growth. We’ve been through this cycle with the first big AWS growth wave, and liked the results. We expect to feel similarly about this next wave, with much larger potential downstream revenue and FCF.
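The dynamic described above can be illustrated with a toy model. This is only a sketch under stated assumptions: every number below (the capex ramp, the one-year lag, the five-year useful life, and the 35% annual cash yield) is a hypothetical placeholder chosen for illustration, not an Amazon figure, and the model ignores operating costs, taxes, and discounting.

```python
def simulate_fcf(capex_by_year, lag_years=1, useful_life=5, annual_cash_yield=0.35):
    """Toy cash-cycle model: cash goes out up front, comes back after a lag.

    All parameters are hypothetical placeholders, not Amazon figures.
    """
    horizon = len(capex_by_year) + lag_years + useful_life - 1
    fcf = [0.0] * horizon
    for year, capex in enumerate(capex_by_year):
        fcf[year] -= capex  # capex is paid before the capacity is monetized
        start = year + lag_years
        for t in range(start, start + useful_life):
            fcf[t] += capex * annual_cash_yield  # cash returned over the asset's life
    return fcf


# Hypothetical ramp: capex grows quickly for four years, then stops.
capex = [100.0, 150.0, 225.0, 300.0]
fcf = simulate_fcf(capex)

# Running total of free cash flow across the whole horizon.
cumulative = []
running = 0.0
for value in fcf:
    running += value
    cumulative.append(running)

for year, (annual, total) in enumerate(zip(fcf, cumulative)):
    print(f"year {year}: annual FCF {annual:8.1f}, cumulative {total:8.1f}")
```

With these made-up inputs, annual FCF is negative in each year of the capex ramp and turns positive once the earlier tranches are generating cash, so cumulative FCF ends well above zero. Changing the lag or yield shifts when that crossover happens, which is the sensitivity the paragraph is describing.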

6/ We have customer commitments that make our capex investments predictable. We’re not investing approximately $200 billion in capex in 2026 on a hunch. The recent OpenAI commitment (over $100 billion) is an example of this, but there are several other customer agreements completed (and unannounced), or deep in process. Of the AWS capex we expect to spend in 2026, much of which will be monetized in 2027-2028, we already have customer commitments for a substantial portion of it.

We are willing to make large capex investments and endure short-term FCF headwinds for the substantial medium to long-term FCF surplus. AI is a once-in-a-lifetime opportunity where the current growth is unprecedented and the future growth even bigger. AWS has a significant leadership position with the broadest functionality, strongest security and operational performance, largest share of customers and revenue, strong desire from customers to run their AI in AWS, and an opportunity to build what could be a new pillar for Amazon in chips. We’re not going to be conservative in how we play this—we’re investing to be the meaningful leader, and our future business, operating income, and FCF will be much larger because of it.
