Key takeaways

- AWS invested $110 million to give university researchers access to purpose-built AI chips.

- AWS Trainium is speeding up AI research at UC Berkeley, MIT, Carnegie Mellon, and other universities.

- Participating teams plan to release their work as open source, so improvements flow back to the broader developer community.

Writing code that fully unlocks the performance of purpose-built AI chips is one of AI infrastructure's most persistent challenges. It affects outcomes in healthcare, computer science, and every other field AI now touches. Many developers have only ever worked with GPUs, which limits their ability to tailor applications to chips designed specifically for AI workloads.

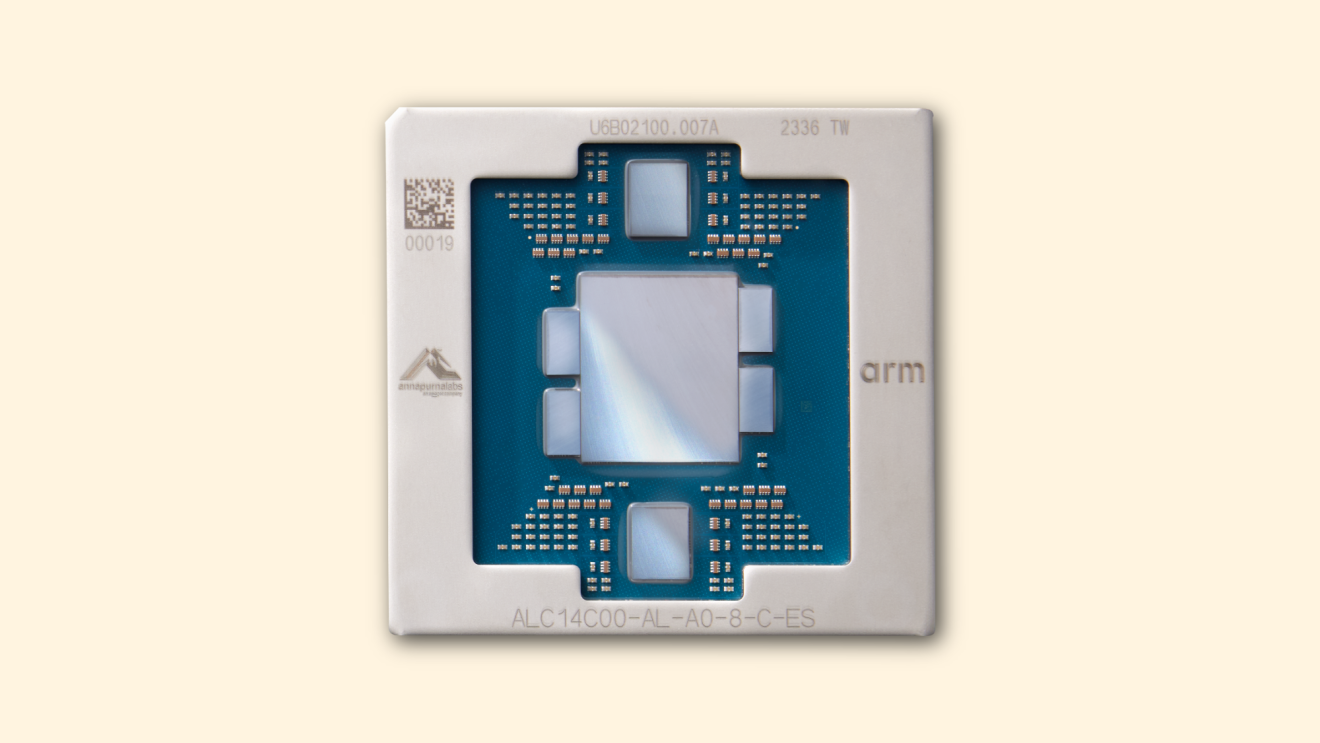

Amazon is working to change that by investing in the people who will shape the future of AI: the next generation of AI researchers. Through Build on Trainium, a $110 million program, Amazon gives students and faculty access to Trainium—its purpose-built chip designed for training and serving frontier AI models and applications. The program provides dedicated computing clusters, tools, and direct access to Amazon’s own data scientists and Neuron experts.

Since launching, the program has engaged more than 10,000 students across dozens of research proposals, which tackle some of the biggest challenges in machine learning, computer science, healthcare, and quantum computing.

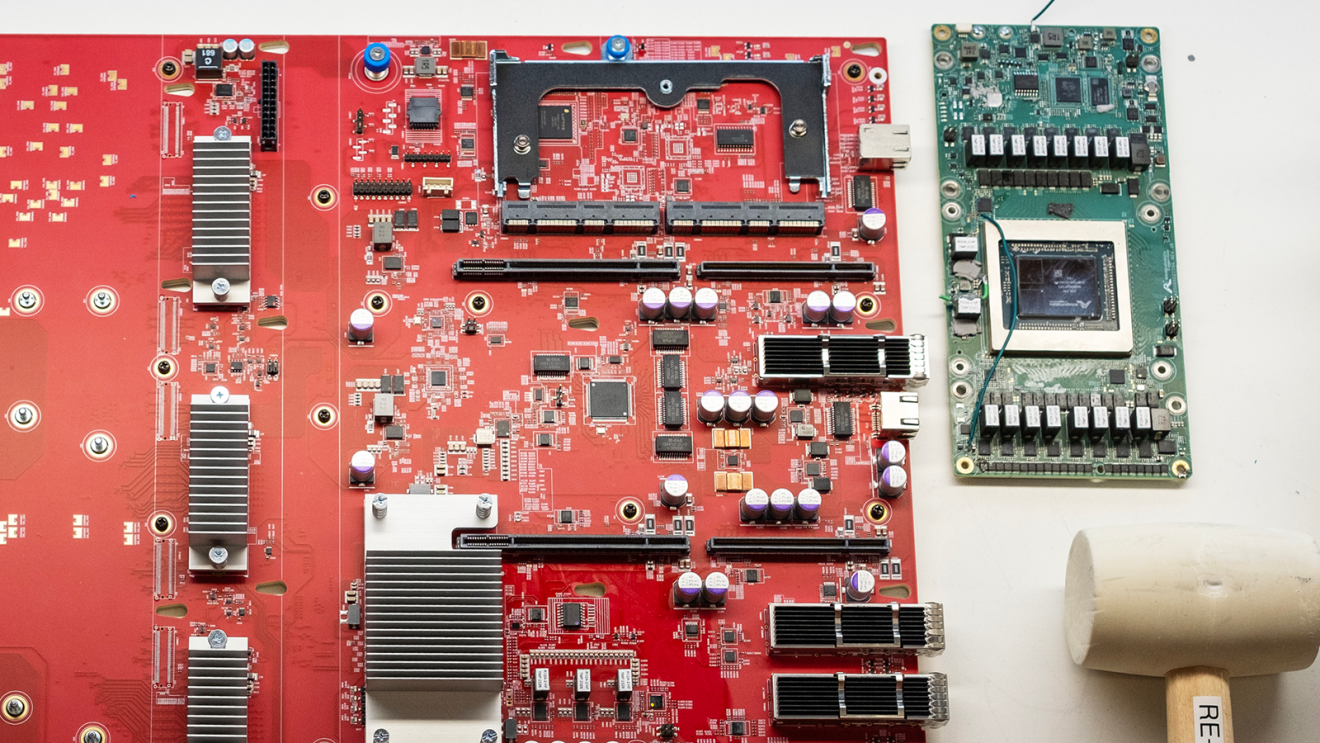

AWS Trainium2 chip

UC Berkeley teams are building optimized kernels to address the most complex machine learning challenges

Two UC Berkeley teams sit at the center of Build on Trainium’s early work. Both are tackling the same core challenge—building optimized kernels, the low-level computational routines that determine how efficiently a chip executes AI workloads—but from different angles.

Professor Christopher Fletcher's team built TeAAL, a programming framework that lets developers describe what they want to compute rather than specifying how the hardware should execute it. TeAAL handles the translation, automatically generating optimized low-level code for Trainium.

In testing, the approach ran 1.4 to 1.6 times faster than standard methods when applied to LoRA, one of the most widely used techniques for fine-tuning large AI models. The framework has earned recognition from IEEE Micro, one of the field's leading publications.
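
Einstein-summation (einsum) notation is a common declarative way to write tensor computations, and it can illustrate TeAAL's central idea: state what to compute and let the framework decide how. The sketch below is a toy evaluator written only for this illustration. It is not TeAAL's notation, API, or code generator, and it deliberately uses the slowest possible schedule, a single flat loop nest, where a real framework would search for an efficient one. The second example writes LoRA's adapter path as a single spec. LoRA adds the product of two small low-rank matrices to a frozen weight. The names `einsum`, `x`, `A`, and `B` are hypothetical.

```python
import itertools


def _shape(tensor, rank):
    """Return the dimensions of a nested-list tensor of the given rank."""
    dims = []
    for _ in range(rank):
        dims.append(len(tensor))
        tensor = tensor[0]
    return dims


def _get(tensor, index):
    """Read one element of a nested-list tensor."""
    for i in index:
        tensor = tensor[i]
    return tensor


def _build(values, dims, prefix=()):
    """Turn an {index-tuple: value} map into nested lists of shape dims."""
    if len(prefix) == len(dims):
        return values.get(prefix, 0.0)
    return [_build(values, dims, prefix + (i,)) for i in range(dims[len(prefix)])]


def einsum(spec, *operands):
    """Evaluate an Einstein-summation spec such as "ik,kj->ij".

    The spec says only WHAT each output element is: a product of operand
    elements, summed over every index that does not appear after "->".
    HOW to compute it (loop order, tiling, memory layout) is left to this
    function, which here simply picks the most naive schedule.
    """
    lhs, out_idx = spec.replace(" ", "").split("->")
    in_idxs = lhs.split(",")
    if len(in_idxs) != len(operands):
        raise ValueError("spec and operand count disagree")

    # Infer each index letter's extent from the operand shapes.
    sizes = {}
    for idx, op in zip(in_idxs, operands):
        for letter, n in zip(idx, _shape(op, len(idx))):
            if sizes.setdefault(letter, n) != n:
                raise ValueError(f"inconsistent size for index {letter!r}")

    # Naive schedule: one flat loop nest over every index letter.
    letters = list(sizes)
    acc = {}
    for combo in itertools.product(*(range(sizes[c]) for c in letters)):
        env = dict(zip(letters, combo))
        term = 1.0
        for idx, op in zip(in_idxs, operands):
            term *= _get(op, [env[c] for c in idx])
        key = tuple(env[c] for c in out_idx)
        acc[key] = acc.get(key, 0.0) + term
    return _build(acc, [sizes[c] for c in out_idx])


# Matrix multiply, stated declaratively.
print(einsum("ik,kj->ij", [[1, 2], [3, 4]], [[5, 6], [7, 8]]))
# → [[19.0, 22.0], [43.0, 50.0]]

# LoRA-style adapter path: input x times low-rank factors A (d x r) and B (r x d).
x = [[1.0, 2.0]]
A = [[1.0], [1.0]]
B = [[0.5, -0.5]]
print(einsum("bi,ir,rj->bj", x, A, B))
# → [[1.5, -1.5]]
```

The spec `"bi,ir,rj->bj"` can be evaluated as `(x·A)·B`, which only materializes a small rank-`r` intermediate. It can also be evaluated as `x·(A·B)`, which first builds a full `d × d` matrix. Both orders give the same answer. Choosing the cheaper order, and mapping it well onto the hardware, is the kind of decision a declarative framework is meant to make on the developer's behalf.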

Fireside chat moderated by Nafea with UC Berkeley professors (Christopher Fletcher, Sophia Shao, Alvin Cheung) and PhD students, followed by student research presentations on Trainium's open architecture via the Neuron Kernel Interface (NKI).

Professors Sophia Shao and Alvin Cheung are taking a different approach. Their system, Autocomp, uses large language models to automatically generate and optimize the low-level code that runs on Trainium, essentially using AI to extract the full potential from AI hardware. Where TeAAL gives developers an easier way to write code themselves, Autocomp automates the entire process. It iterates on code using performance feedback from real hardware, with no manual tuning required.

The next phase of the Autocomp project will extend the system from individual operations to full end-to-end pipelines for models like Llama and Qwen, running on Trainium's most powerful configurations.

How other American universities are using Trainium

At Carnegie Mellon University, MIT, UCLA, and the University of Illinois Urbana-Champaign, among others, researchers are using Trainium to accelerate discovery across fields that stand to benefit from more computational muscle.

"The Build on Trainium program has given our lab access to thousands of next-generation AI chips, allowing us to tackle research problems that were previously out of reach—while giving students hands-on experience with the same infrastructure powering today's largest AI models," said Minjia Zhang, assistant professor at the University of Illinois Urbana-Champaign. "We're focused on making AI training faster and more efficient, and we're excited to share what we learn with the broader research community."

At Carnegie Mellon University, a team in the Catalyst research group is optimizing FlashAttention. This algorithm significantly speeds up the attention computation at the core of today's AI models, in both training and inference, by reducing how often data moves between different parts of a chip's memory.
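
The idea behind FlashAttention can be sketched in a few lines. Standard attention computes a score for every query-key pair, stores all of them, and only then normalizes them with a softmax. FlashAttention instead streams keys and values through in small blocks. It keeps a running maximum and a running sum (an "online" softmax), so the full score matrix never has to be written out to slower memory. The pure-Python sketch below shows that arithmetic for a single query vector. It illustrates the published technique in general, not the Carnegie Mellon team's kernel or any NKI code, and plain Python lists do not model an actual memory hierarchy. The names `naive_attention`, `tiled_attention`, and `block_size` are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def naive_attention(q, keys, values):
    """Reference: materialize every score, then softmax over all of them."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [dot(q, k) * scale for k in keys]
    m = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


def tiled_attention(q, keys, values, block_size=2):
    """Online-softmax attention: visit keys/values one block at a time,
    keeping only a running max, a running denominator, and a running output."""
    scale = 1.0 / math.sqrt(len(q))
    dim = len(values[0])
    running_max = float("-inf")
    running_sum = 0.0
    acc = [0.0] * dim  # unnormalized weighted sum of values seen so far
    for start in range(0, len(keys), block_size):
        k_blk = keys[start:start + block_size]
        v_blk = values[start:start + block_size]
        scores = [dot(q, k) * scale for k in k_blk]  # only this block's scores
        new_max = max(running_max, max(scores))
        # Rescale everything accumulated so far to the new maximum.
        # On the first block, exp(-inf) == 0.0, so nothing is carried over.
        correction = math.exp(running_max - new_max)
        running_sum *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            for i in range(dim):
                acc[i] += w * v[i]
        running_max = new_max
    return [a / running_sum for a in acc]


q = [0.5, -1.0]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.5], [0.3, 0.3]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
print(naive_attention(q, keys, values))
print(tiled_attention(q, keys, values, block_size=2))
```

Both functions return the same result, up to floating-point rounding, for any `block_size`. The only difference is that `tiled_attention` holds one block of scores at a time. On real accelerators the block is sized to fit in fast on-chip memory, and that is where the savings in data movement come from.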

"What amazed us most was the speed at which we could iterate," said Todd Mowry, a computer scientist at Carnegie Mellon. "We achieved meaningful improvements on top of the prior state of the art in just a week."

Trainium is accelerating research from healthcare to quantum computing

At MIT, researcher Brian Anthony is using Trainium to train AI models for 3D ultrasound image analysis, a field where faster model training can translate directly to better patient care. His team achieved 50% higher throughput while training their models on Trainium compared with traditional GPUs, reducing total training time from months to weeks.

And at UCLA, Professor Jens Palsberg is using the program for a different purpose entirely: simulating quantum circuits, a core challenge in quantum computing research and education.

"The project brought together a strong group of students who collaboratively built a high-performance simulator, enabling deeper experimentation and hands-on learning at a scale that simply wasn't possible before," Palsberg said.

Annapurna Labs’ engineers design and test custom silicon hardware in Austin, Texas.

Open source is critical to advancing research for all AI developers

A key enabler of this work is the Neuron Kernel Interface, or NKI, which gives researchers low-level access to Trainium's architecture. That level of visibility is rare in academic settings and allows students to build solutions that can outperform standard software libraries and unlock more of AI chips’ potential.

Beyond accelerating research, the program creates a productive feedback loop. The tools, optimizations, and techniques these teams develop make Trainium more capable and accessible for every developer who builds on it. All teams in the program plan to release their work as open source software and publish findings at top research venues, so the benefits extend well beyond any single lab or institution.

By investing in the next generation of AI researchers, Amazon isn't just building a better chip. It's lowering the barrier for every developer who comes after them, working on the next big breakthrough in healthcare, AI agents, robotics, and beyond.
