Key takeaways

- AI chips are the foundation of every AI experience, from chatbots to personalized recommendations.

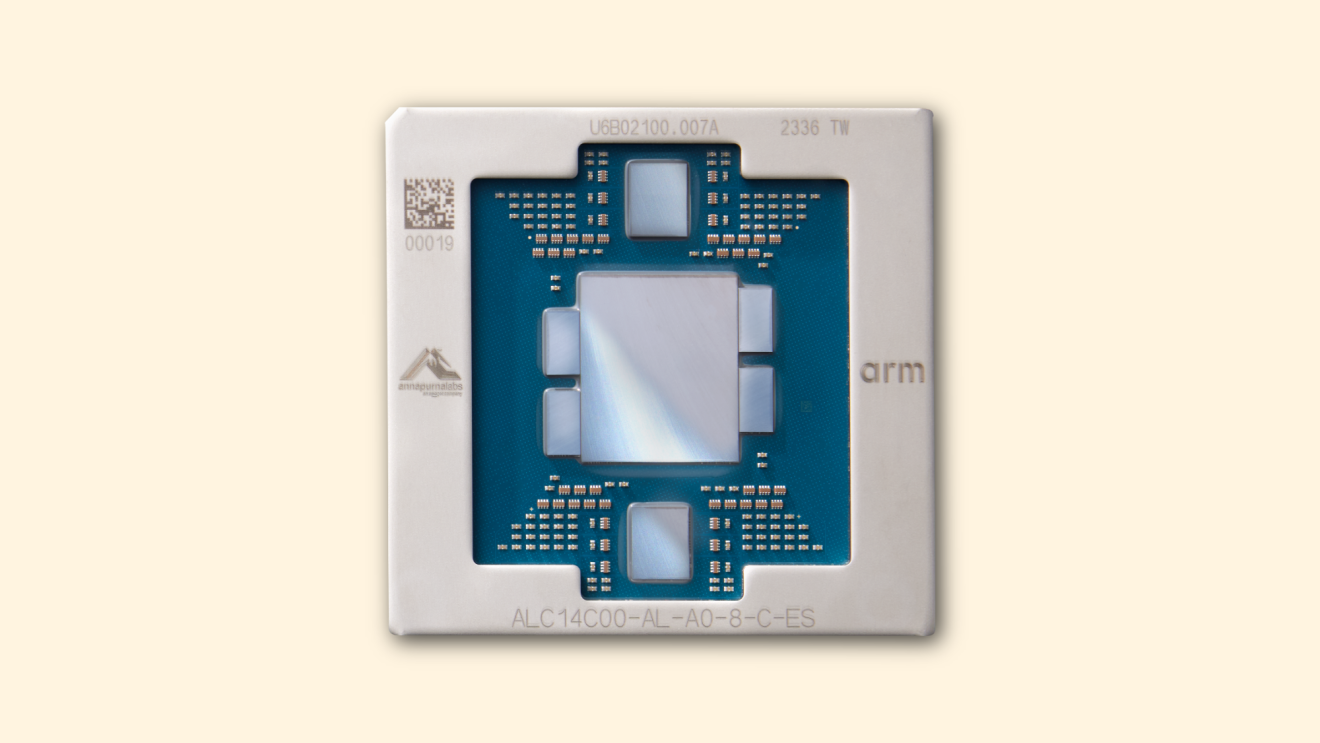

- AWS Trainium and Graviton deliver more performance per dollar than comparable alternatives for their respective workloads.

- Amazon's custom silicon business has surpassed a $20 billion annual revenue run rate.

Page overview

AI Accelerator

A broad category of chips built specifically for AI workloads rather than general computing. Because AI tasks like training and inference have unique demands, purpose-built accelerators can deliver significantly better performance and efficiency than general-purpose chips. AWS Trainium chips are an example, designed from the ground up to provide high performance at lower cost for generative AI workloads.

A group of chips and servers connected together to function as a single, powerful system. Training a frontier AI model requires far more computing power than any single chip can provide, so thousands of chips are linked together in clusters that communicate at ultra-high speeds. Amazon’s Project Rainier is the world’s largest AI computing cluster—the size and efficiency of these clusters are a major factor in how quickly and cost-effectively a model can be trained.

The general-purpose "brain" of a computer, designed to handle a wide range of tasks from running applications to managing operating systems. As AI systems grow more complex, especially with the rise of agentic AI, CPUs are increasingly critical for orchestrating workloads across an entire system. Amazon designed its Graviton processors for cloud computing, and the latest generation delivers up to 25% higher performance than its predecessor.

A chip originally designed for rendering graphics, now widely used for AI because it can perform many calculations at once—a capability known as parallel processing. GPUs became the go-to hardware for training large AI models because of their ability to crunch massive amounts of data simultaneously. But they're just one part of the AI compute stack. As workloads diversify, the industry is moving toward a broader mix of specialized hardware.

The process where a trained AI model applies what it has learned to generate outputs, like answering a question, translating a sentence, or creating an image. Training is how an AI learns, but inference is how it puts that training into practice. Every time you interact with a chatbot or receive a personalized recommendation, inference is happening behind the scenes. The requirements for inference are very different from training: speed and cost-per-query matter enormously, which is why chips optimized specifically for inference are increasingly important.

A measure of how much computing power you get for every dollar spent. In the AI chip world, this is one of the most important metrics. Two chips might deliver similar raw performance, but if one costs significantly less to run, it offers better price performance. This is a key reason companies are adopting purpose-built silicon like Trainium and Graviton, which are designed to deliver more output per dollar than general-purpose alternatives.

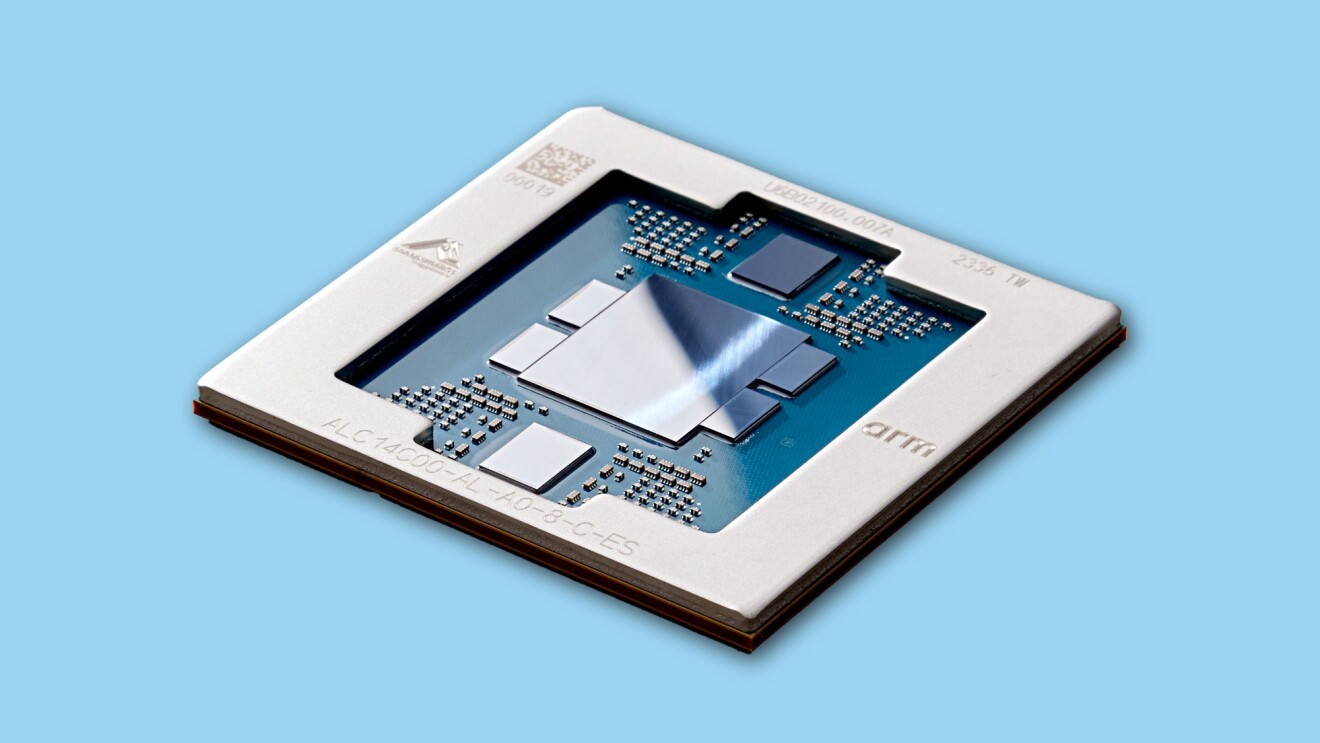

Chips designed from the ground up for specific workloads rather than trying to be good at everything. This is one of the most important shifts happening in AI infrastructure. Instead of relying solely on one-size-fits-all hardware, companies are increasingly turning to specialized chips that optimize for performance, efficiency, and cost. Amazon designs Graviton for general cloud compute and Trainium for AI training and inference, and its custom silicon business recently surpassed a $20 billion annual revenue run rate.

How many AI requests or operations a system can handle at the same time. High throughput is what allows AI to scale to millions of users. A single popular AI application might need to process thousands of requests per second. Purpose-built chips are designed to maximize throughput without ballooning costs, which is especially important as more businesses integrate AI into their products and services.

The process of teaching an AI model by feeding it massive datasets so it can learn patterns, relationships, and how to make predictions. Training is one of the most compute-intensive workloads in technology: building a frontier AI model can require thousands of chips running for weeks or months. This is where purpose-built silicon makes a real difference. Anthropic, for example, currently uses more than one million Trainium2 chips to train and serve its Claude models.

A general term for any computing task or set of tasks that a chip is asked to perform. In AI, different workloads have very different demands. Training a model, running inference, and orchestrating AI agents each stress different parts of the hardware in different ways. This is why the industry is shifting away from one chip for everything and toward matching the right chip to the right workload.

Trending news and stories

- Amazon rolls out 30-minute delivery on thousands of groceries and essentials

- Amazon Pet Days 2026: 65 top deals on pet food, toys, grooming, and health supplies to shop now

- How Amazon Think Big Spaces has 100,000 students participating in STEM education programs

- Amazon Leo mission updates: 300+ satellites deployed following back-to-back Atlas V, Ariane 6 launches