Key takeaways

- The deployment starts with tens of millions of Graviton cores, with the potential to expand.

- Meta is now one of the largest Graviton customers in the world.

- The deal builds on Meta's long-standing AWS relationship and use of Amazon Bedrock at scale to support its next generation of AI.

Meta has signed an agreement to deploy AWS Graviton processors at scale. The deal marks a significant expansion of a long-standing partnership between the two companies as Meta builds its next generation of AI.

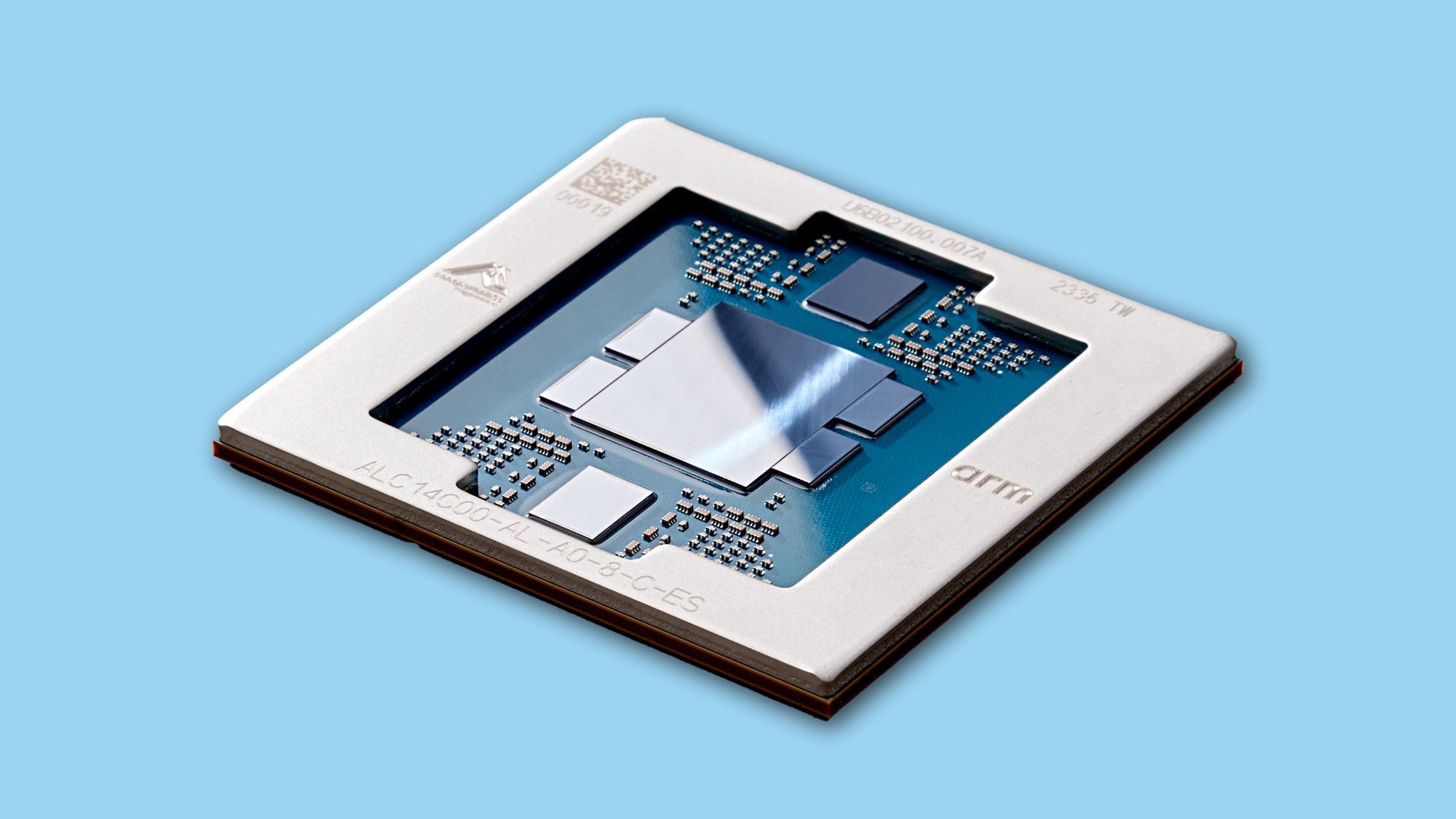

The deployment starts with tens of millions of Graviton cores, with the flexibility to expand as Meta's AI capabilities grow. The deal reflects a shift in how AI infrastructure gets built: while GPUs remain essential for training large models, the rise of agentic AI is creating massive demand for CPU-intensive workloads—real-time reasoning, code generation, search, and orchestrating multi-step tasks. Graviton5 is purpose-built for these workloads, giving Meta the processing power to run them efficiently at scale.

The chips will power various workloads at Meta, including supporting the company’s AI efforts. That work requires infrastructure that can handle billions of interactions while coordinating complex, multi-step agent workflows—exactly the kind of CPU-intensive work Graviton is designed for.

AWS Graviton chips powering AI workloads

As organizations increasingly adopt agentic AI (autonomous systems that can reason, plan, and complete complex tasks), the demand for high-performance, energy-efficient compute infrastructure has never been greater. Meta is building at the forefront of agentic AI, and its broad Graviton deployment reflects a simple reality: agentic workloads like code generation, real-time reasoning, search, and multi-step task orchestration are CPU-intensive, and purpose-built chips are the most efficient way to power them.

The Graviton5 chip features 192 cores and a cache five times larger than the previous generation's, which reduces the latency of communication between cores by up to 33%. That means faster data processing with greater bandwidth, both key requirements for agentic AI systems that need to continuously reason through and execute multi-step tasks.

Graviton-based instances are built on the AWS Nitro System, which uses dedicated hardware and software to deliver high performance, high availability, and high security. The Nitro System enables bare-metal instances with direct access to the hardware while still providing the familiar Elastic Network Adapter (ENA) and Amazon Elastic Block Store (Amazon EBS) devices, allowing Meta to run its own virtual machines without compromising performance.

Graviton5 instances also support the Elastic Fabric Adapter (EFA), which enables low-latency, high-bandwidth communication between instances. This is essential for Meta’s agentic AI workloads, where large-scale tasks are distributed across many processors working in coordination.

As a longtime AWS customer, Meta has relied on AWS's highly scalable and secure cloud infrastructure to power its global businesses.

“This isn't just about chips; it's about giving customers the infrastructure foundation, as well as data and inference services, to build AI that understands, anticipates, and scales efficiently to billions of people worldwide,” said Nafea Bshara, vice president and distinguished engineer, Amazon. “Meta's expanded partnership, deploying tens of millions of Graviton cores, shows what happens when you combine purpose-built silicon with the full AWS AI stack to power the next generation of agentic AI.”

“As we scale the infrastructure behind Meta's AI ambitions, diversifying our compute sources is a strategic imperative. AWS has been a trusted cloud partner for years, and expanding to Graviton allows us to run the CPU-intensive workloads behind agentic AI with the performance and efficiency we need at our scale,” said Santosh Janardhan, head of infrastructure, Meta.

Energy efficiency benefits of Graviton

AWS Graviton5 is built on 3-nanometer chip technology—a manufacturing process that produces smaller, more efficient processors. Because AWS designs its chips from the ground up and controls the full process from chip design through server architecture, it can optimize performance and efficiency in ways that off-the-shelf processors can't match.

The result is infrastructure that delivers stronger performance while maintaining leading energy efficiency: Graviton5 delivers up to 25% better performance than the previous generation. That combination helps Meta pursue ambitious AI goals while staying on track with its sustainability targets.

As the demand for AI compute grows across the industry, the efficiency of the underlying infrastructure becomes increasingly important—both for managing costs and reducing environmental impact.

The deal signals a new chapter in how large-scale AI infrastructure gets built—and how purpose-built chips like Graviton can help companies like Meta deliver smarter, more personalized experiences to billions of people worldwide.
