Swami Sivasubramanian, vice president of Database, Analytics, and Machine Learning at Amazon Web Services

AWS expands Amazon Bedrock with new model provider and additional FMs

Because model choice is paramount, Amazon Bedrock is adding Cohere as an FM provider, along with the latest FMs from Anthropic and Stability AI. Cohere will add its flagship text generation model, Command, as well as its multilingual text understanding model, Cohere Embed. Additionally, Anthropic has brought Claude 2, the latest version of its language model, to Amazon Bedrock, and Stability AI announced it will release the latest version of Stable Diffusion, SDXL 1.0, which produces improved image and composition detail, generating more realistic creations for films, television, music, and instructional videos. These FMs join AWS’s existing offerings on Amazon Bedrock, including models from AI21 Labs and Amazon, to help meet customers where they are on their machine learning journey, with a broad and deep set of AI and ML resources for builders of all levels of expertise.

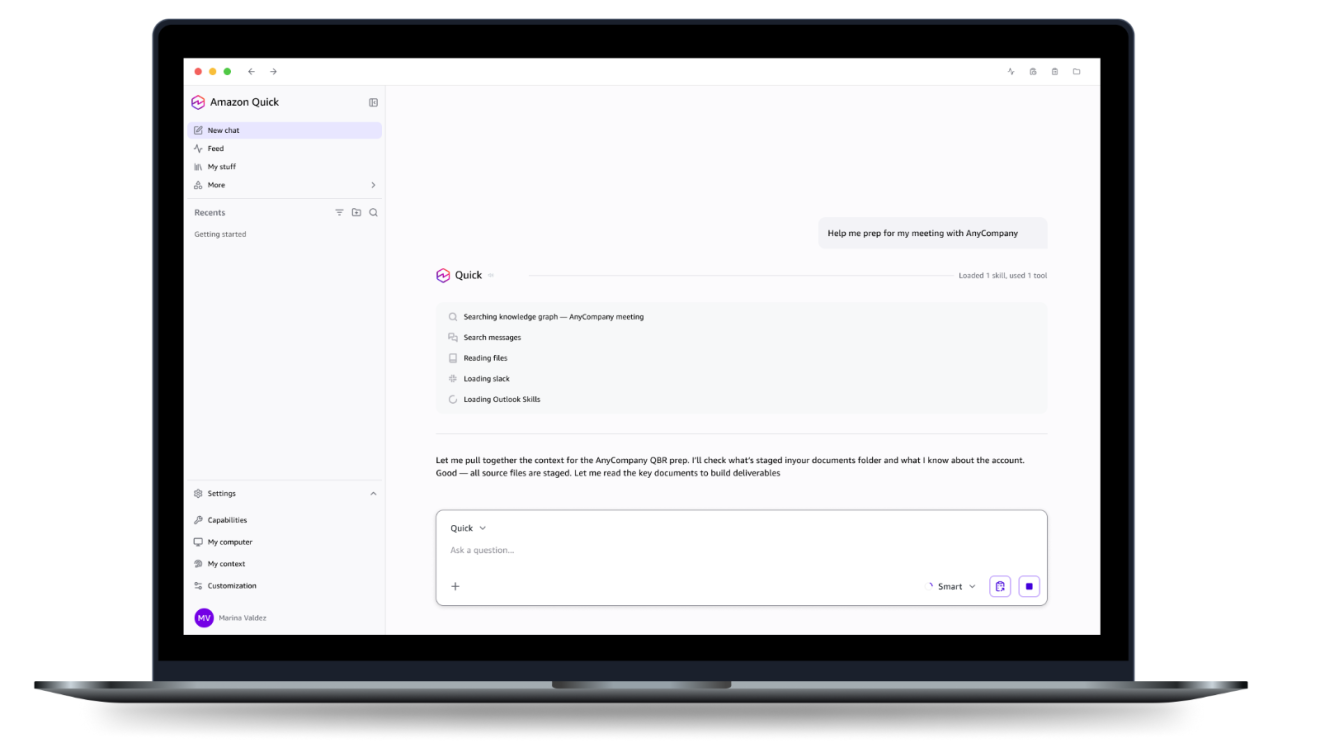

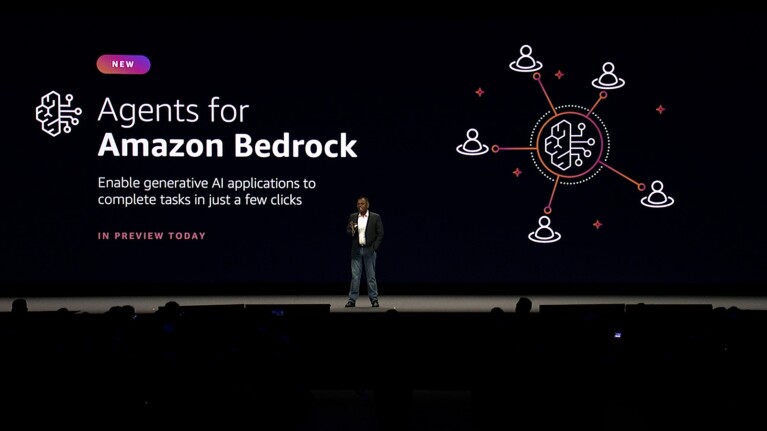

While FMs are incredibly powerful on their own for a wide range of tasks, like summarization, they need additional programming to execute more complex requests. For example, they don’t have access to company data, like the latest inventory information, and they can’t automatically access internal APIs. Developers spend hours writing code to overcome these challenges. With just a few clicks, agents for Amazon Bedrock will automatically break down tasks and create an orchestration plan without any manual coding, which makes programming generative AI applications easier for developers. Consider a customer request to return a pair of shoes: “I want to exchange these black shoes for a brown pair instead.” To service it, the agent securely connects to company data, automatically converts it into a machine-readable format, provides the FM with the relevant information, and then calls the right set of APIs.
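To make the idea of an orchestration plan concrete, here is a minimal Python sketch of the shoe-exchange flow. It is not the Amazon Bedrock API: the `Step` class, the `INVENTORY` dictionary, and every function name are hypothetical stand-ins, and the plan is hard-coded where an agent would have an FM produce it.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One step in a plan: an action name and the item it applies to."""
    action: str
    item: str


# Hypothetical stand-in for company data the agent would connect to.
INVENTORY = {"brown shoes": 3, "black shoes": 0}


def plan_exchange(returned_item, wanted_item):
    """Break the exchange request into ordered steps (hard-coded here)."""
    return [
        Step("check_inventory", wanted_item),
        Step("create_return", returned_item),
        Step("place_order", wanted_item),
    ]


# Hypothetical internal APIs. Each returns (succeeded, log message).
def check_inventory(item):
    in_stock = INVENTORY.get(item, 0) > 0
    return in_stock, f"{'in stock' if in_stock else 'out of stock'}: {item}"


def create_return(item):
    return True, f"return created: {item}"


def place_order(item):
    return True, f"order placed: {item}"


HANDLERS = {
    "check_inventory": check_inventory,
    "create_return": create_return,
    "place_order": place_order,
}


def run(plan):
    """Execute steps in order and stop at the first one that fails."""
    log = []
    for step in plan:
        ok, message = HANDLERS[step.action](step.item)
        log.append(message)
        if not ok:
            break
    return log


if __name__ == "__main__":
    for line in run(plan_exchange("black shoes", "brown shoes")):
        print(line)
```

The sketch stops at the first failed step, so an out-of-stock replacement never triggers a return. That is a design choice of this example, not documented behavior of the service.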

Vector embeddings allow machines to understand relationships across text, images, audio, and video content in a format that’s digestible for ML—making everything from online product recommendations to smarter search results work. Now, with vector engine support for Amazon OpenSearch Serverless, developers will have a simple, scalable, and high-performing solution to build ML-augmented search experiences and generative AI applications without having to manage a vector database infrastructure.
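As a rough illustration of how embeddings capture relationships, the sketch below ranks a tiny catalog by cosine similarity to a query vector. The three-dimensional vectors are made up for the example; real embeddings come from a model and have hundreds or thousands of dimensions. The code uses plain Python and does not call the Amazon OpenSearch Serverless API.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy, hand-written embeddings (assumed values, not model output).
CATALOG = {
    "black leather shoes": [0.9, 0.1, 0.0],
    "brown leather shoes": [0.8, 0.2, 0.1],
    "wireless headphones": [0.0, 0.1, 0.9],
}


def nearest(query_vector, catalog, k=2):
    """Return the names of the k catalog items most similar to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in catalog.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:k]]


if __name__ == "__main__":
    # A query vector close to the two shoe entries.
    print(nearest([0.85, 0.15, 0.05], CATALOG))
```

A vector engine performs the same kind of nearest-neighbor lookup, but over far larger collections and with indexing structures that avoid comparing the query against every stored vector.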

Amazon QuickSight is a unified business intelligence service that helps organizations’ employees easily find answers to questions about their data. Now, QuickSight is combining its existing ML innovations with new LLM capabilities available through Amazon Bedrock to provide generative AI capabilities, called generative BI. These capabilities will help break down silos, making it even easier to collaborate on data across an organization and speeding up data-driven decision making. Using everyday natural language prompts, analysts will be able to author or fine-tune dashboards, and business users will be able to share insights with compelling visuals within seconds.

Updating electronic health records is one of the most cumbersome tasks for doctors and nurses. Clinicians will find relief when AWS HealthScribe, a HIPAA-eligible service, empowers health care software vendors to more easily build clinical applications that leverage generative AI. HealthScribe uses speech recognition and Amazon Bedrock–powered generative AI to create transcripts and generate easy-to-review clinical notes, with built-in security and privacy features designed to protect sensitive patient data.

Amazon EC2 P5 instances, now generally available, are powered by NVIDIA H100 Tensor Core GPUs, which are optimized for training LLMs and developing generative AI applications. (An “instance” in cloud lingo is virtual access to a compute resource; in this case, compute powered by H100 GPUs.) AWS is the first leading cloud provider to make NVIDIA’s highly sought-after H100 GPUs generally available in production. These instances are ideal for training and running inference for the increasingly complex LLMs and compute-intensive generative AI applications, including question answering, code generation, video and image generation, speech recognition, and more. With access to H100 GPUs, customers will be able to create their own LLMs and FMs on AWS faster than ever.

More than 75% of organizations plan to adopt big data, cloud computing, and AI in the next five years, according to the World Economic Forum. To help people train for the AI and ML jobs of the future, AWS released on-demand skills trainings to support those who want to understand, implement, and begin using generative AI. Amazon has designed training courses specifically for developers who want to use Amazon CodeWhisperer, engineers and data scientists who want to leverage generative AI by training and deploying FMs, executives seeking to understand how generative AI can address their business challenges, and AWS Partners helping their customers harness generative AI’s potential.
